How does the meaning of everyday objects change in the age of AI? The same chair can be touched, photographed, and described in words, and that description can then be given to an AI to generate an image. Where does reality get lost in this process, where do new meanings emerge, and why can AI be so confidently wrong?

In this workshop we’ll run a hands-on experiment inspired by Joseph Kosuth’s *One and Three Chairs*: we’ll compare the object, a photo, a text description, and an AI-generated image as different forms of representation. You’ll describe an object and see how AI interprets your description, try out generative tools, and observe how image-analysis services label and classify your photo.

What we’ll do:

- a short introduction: why AI “sees” differently from humans

- an exercise: observing and describing an object

- image generation: turning your descriptions into AI images

- checking the “machine gaze”: labels, detected objects, and confidence scores

- discussion: how platforms, captions, and algorithms shape context and expectations

You’ll leave with:

- an understanding of how words, images, and objects relate in an AI-driven media environment

- the ability to read AI descriptions and “confident” labels critically

- practical experience with prompts and image analysis through real examples

Bring: a smartphone with a camera and internet access.

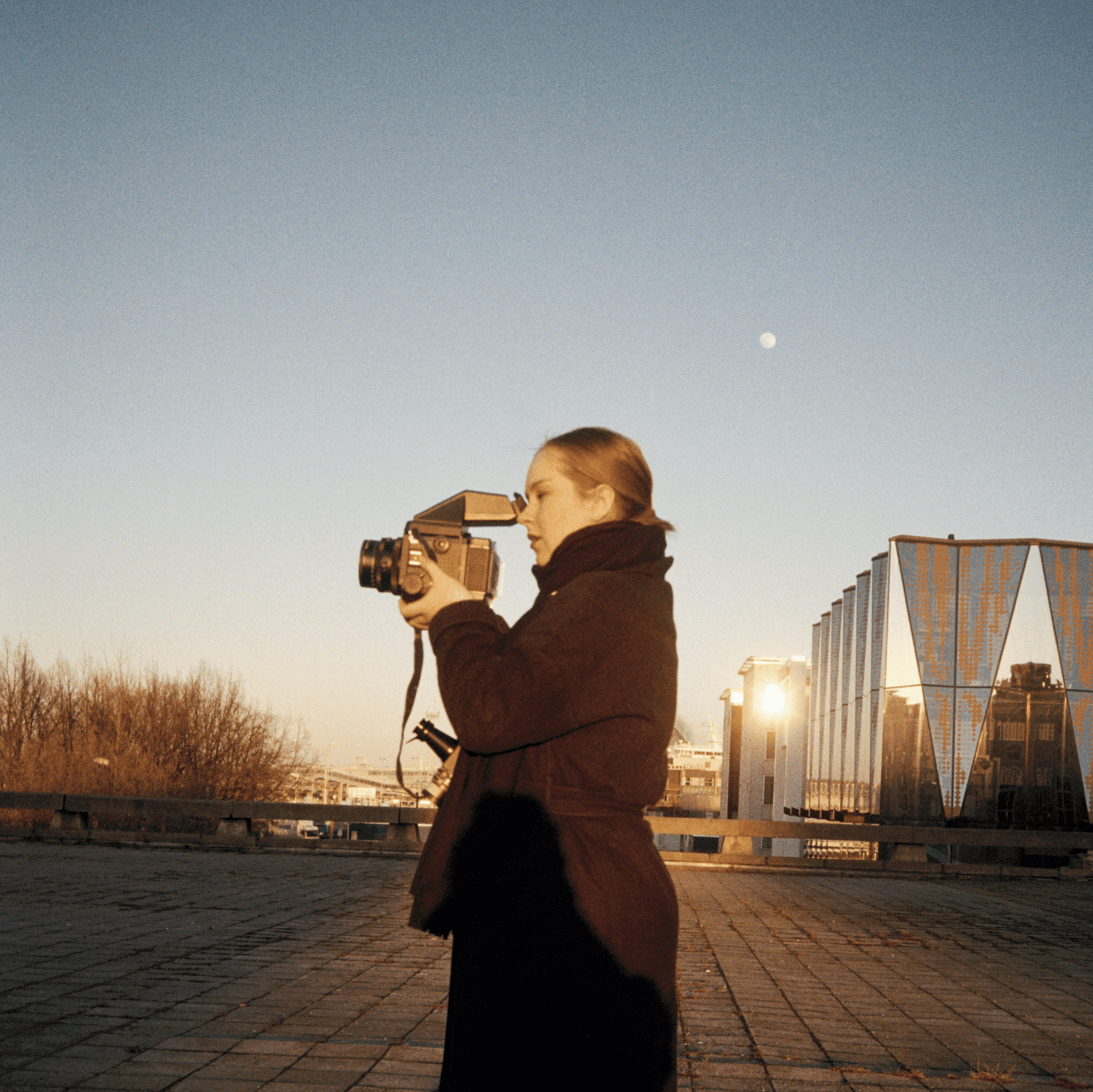

Anna-Maria Vaskovskaja is a photographer and media literacy specialist. With over 13 years of experience in visual arts and photography, she focuses on how visual content shapes perception and how it is interpreted. In her training sessions, she helps participants learn to assess the reliability of information, recognise manipulation, and understand the role artificial intelligence plays in what we see on digital platforms and how we interpret it.

The workshop is conducted in Russian.